When universities manage student records, most rely on legacy platforms built decades ago that now serve over 80% of UK higher education. These systems cost millions to implement and require dedicated teams to maintain. Having experienced painful implementations of such systems myself, I wanted to know whether, armed with AI coding tools, I could build a viable alternative.

The Experiment – Understanding the Technology as a Non-Expert

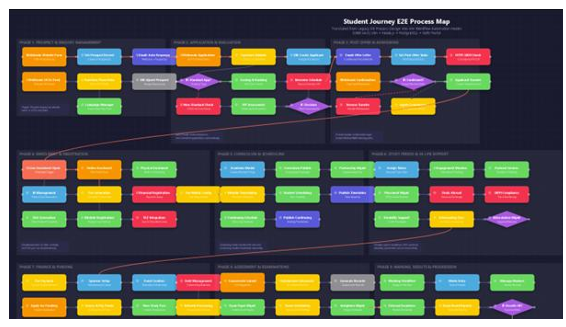

Over the past month, I built SJMS, the Student Journey Management System: a full-featured student information system designed from the ground up using Claude Code, Perplexity Computer, TypeScript, Docker, MinIO, React, PostgreSQL, and Prisma ORM. These are tools that the AI coding assistants manage for you. The goal wasn’t just a prototype. I wanted HERM compliance (the global Higher Education Reference Model used by 1,000+ institutions), HESA data standards, and proper multi-persona security: the same requirements any UK university would demand.

The result: 298 database models across 23 domains, four distinct user portals (Admin, Student, Academic, Applicant), Keycloak SSO, and an Agile build methodology comparable with commercial products.

The AI-Powered Virtual Team

The revelation was how AI tools transformed solo development. Perplexity and Claude Code acted as my senior developers. Given structured prompts with role assignments, branch naming conventions, and explicit stop conditions, they produced production-quality code across complex feature sets.

Cursor BugBot and GitHub Copilot delivered automated reviews and testing. Perplexity also served as technical architect, analysing each new section of the build and identifying strategic risks before they became production incidents. This “virtual team” approach let me ship GitHub pull requests (changes) in rapid succession, each building on the last. But it required something I didn’t expect: more process discipline than working with human developers, not less.

The Hard Lessons: Security is never free.

The problem I hit was a recurring data leak pattern. When students queried certain endpoints, they could see ALL records, not just their own. The vulnerability had three layers: middleware injected scope parameters, validation stripped them, and the database query ignored them entirely. This pattern appeared on every endpoint I audited. The lesson: security must be enforced at the data access layer, not in middleware.
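To make that lesson concrete, here is a minimal sketch of scoping enforced inside the data access function itself. Every name in it (`Caller`, `EnrolmentRecord`, `listEnrolments`, the in-memory `enrolments` array) is hypothetical and stands in for a real table and query; it is not taken from the SJMS codebase, and the rule that only admins see every row is an assumption made for illustration.

```typescript
// Hypothetical types and names for illustration only.
type Role = "admin" | "academic" | "student" | "applicant";

interface Caller {
  userId: string;
  role: Role;
}

interface EnrolmentRecord {
  id: string;
  studentId: string;
  moduleCode: string;
}

// In-memory stand-in for a database table.
const enrolments: EnrolmentRecord[] = [
  { id: "e1", studentId: "s1", moduleCode: "CS101" },
  { id: "e2", studentId: "s2", moduleCode: "CS101" },
  { id: "e3", studentId: "s1", moduleCode: "MA201" },
];

// The caller is a required argument of the data access function, so a route
// cannot run this query without a scope, and validation cannot strip it.
function listEnrolments(caller: Caller): EnrolmentRecord[] {
  switch (caller.role) {
    case "admin":
      // Assumption: admins may see every record.
      return [...enrolments];
    case "student":
      // Students only ever receive their own rows.
      return enrolments.filter((e) => e.studentId === caller.userId);
    default:
      // Deny by default for any role without an explicit rule.
      return [];
  }
}

console.log(listEnrolments({ userId: "s1", role: "student" }).length); // 2
console.log(listEnrolments({ userId: "a1", role: "admin" }).length); // 3
```

Because the filter lives where the data is read, a middleware or validation bug can no longer widen what a student sees. In a real application the same idea would be expressed as a mandatory filter on the database query rather than an array filter.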

Authentication is a productivity black hole.

Keycloak integration consumed disproportionate development time. SSO configuration issues and session management bugs repeatedly stalled feature development. If I started again, I’d build the entire application with simple JWT auth first and swap in enterprise SSO last.

AI agents need guardrails.

Claude Code sometimes entered fix loops — each “fix” creating a new error. It sometimes claimed features existed that didn’t. I needed explicit verification commands in every prompt, mandatory output reporting, and human-in-the-loop browser testing after each build phase.

Version proliferation kills momentum.

I iterated through eight named versions before stabilising. Each architectural pivot consumed days of refactoring. The lesson: commit to one architecture early, prove it works end-to-end, then enhance incrementally. Perfectionism in architecture is the enemy of delivery, no matter what approach you use to build the product.

What Actually Works: The Plan-Build-Test-Verify cycle is the best approach.

The model I developed was to plan using my detailed documentation from previous transformation programmes, aligned with student journey maps and the HERM requirements. This provided the basis for developing build prompts for Claude Code (structured prompts, explicit roles, stop conditions). After each build cycle I tested with automated tools (BugBot PR review, TypeScript strict compilation) and verified with both Virtual Subject Matter Experts and me as the human in the loop doing browser testing (Comet smoke tests with categorised findings). I did not advance to the next phase until all three of these test approaches had passed. This methodology caught three critical data leaks, fourteen UX issues, and a schema drift problem that would have corrupted three connected services, all before any user touched the system.
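The "do not advance until everything passes" rule can be written down as a small gate function. The sketch below is illustrative only: the check names, the `CheckResult` shape, and `canAdvance` are hypothetical and do not describe the tooling actually used on SJMS.

```typescript
// Hypothetical names throughout; a sketch of the gate, not real project tooling.
type CheckName = "automatedTools" | "virtualSmeReview" | "humanBrowserTest";

interface CheckResult {
  name: CheckName;
  passed: boolean;
  findings: string[];
}

const REQUIRED_CHECKS: CheckName[] = [
  "automatedTools",
  "virtualSmeReview",
  "humanBrowserTest",
];

// A phase may advance only when every required check was run and passed.
// A check that was never reported counts as a blocker, not as a pass.
function canAdvance(results: CheckResult[]): { ok: boolean; blockers: string[] } {
  const blockers: string[] = [];
  for (const name of REQUIRED_CHECKS) {
    const result = results.find((r) => r.name === name);
    if (!result) {
      blockers.push(`${name}: not run`);
    } else if (!result.passed) {
      blockers.push(`${name}: failed with ${result.findings.length} finding(s)`);
    }
  }
  return { ok: blockers.length === 0, blockers };
}

const gate = canAdvance([
  { name: "automatedTools", passed: true, findings: [] },
  { name: "virtualSmeReview", passed: false, findings: ["scope not applied"] },
]);
console.log(gate.ok); // false
console.log(gate.blockers);
```

Treating a missing result as a failure is the important design choice here: it stops an AI agent (or a tired human) from skipping a step and reporting success anyway.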

Can the available AI tools build an enterprise-level Student Journey Management System?

Yes — with caveats. The modern stack is more than adequate. AI tools genuinely multiply capability. But the 23-domain, 298-model system I built needs the same testing, security, and maintenance discipline as any enterprise platform. The code writes faster with AI; the thinking doesn’t. The commercial Student System market charges millions because domain complexity — not technical complexity — is the real barrier.

Understanding HESA reporting, HECOS classifications, and the regulatory landscape of HE takes more time than writing the code that implements them.

We’d love to hear your feedback on our journey. Please reach out to us here.